Kafka API essentials

The Nuance Kafka Consumer API uses the standard Kafka concepts and terminology. This section assumes that you have a basic understanding of Kafka.

In particular, the Kafka Consumer API uses the following concepts.

Messages/events

A Kafka message corresponds to an event that occurred in Mix. There are two main types of Mix events:

- Runtime API events: These events are related to the ASRaaS, NLUaaS, DLGaaS, and TTSaaS API transactions (for example, a DLGaaS StartRequest). As such, these messages are organized around the service requests and responses.

- Dialog log events: These events are related to the behavior of the dialog application (for example, the dialog was started, input was received, a transition occurred, and so on) and are used for reporting. These messages are organized around the dialog events.

Each message has a key that is composed of the following two fields:

| Field | Description |
|---|---|
| service | Name of the runtime service that generated this message. Valid values are: TTSaaS, ASRaaS, NLUaaS, DLGaaS, Fabric. |
| id | Randomly generated UUID that uniquely identifies the message. |
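As an illustration of the key structure, the following minimal sketch validates a message key. It assumes the key has already been decoded into a Python dictionary with the two fields from the table; `MessageKey` and `parse_key` are hypothetical helper names, not part of the Nuance API.

```python
import uuid
from dataclasses import dataclass

# Service names listed in the table above.
VALID_SERVICES = {"TTSaaS", "ASRaaS", "NLUaaS", "DLGaaS", "Fabric"}


@dataclass(frozen=True)
class MessageKey:
    """Hypothetical container for the two key fields."""
    service: str
    id: str


def parse_key(raw: dict) -> MessageKey:
    """Validate a decoded key (assumed to be a dict) and wrap it."""
    service = raw["service"]
    if service not in VALID_SERVICES:
        raise ValueError(f"unknown service: {service!r}")
    # uuid.UUID raises ValueError if the string is not a well-formed UUID.
    uuid.UUID(raw["id"])
    return MessageKey(service=service, id=raw["id"])


key = parse_key({"service": "DLGaaS",
                 "id": "9872ec64-50d9-4dcd-9f4f-e8b5099a7f4f"})
print(key.service, key.id)
```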

Topics

Kafka messages are organized into topics. In Mix, topic names correspond to app IDs. Therefore, a Mix topic stores messages for a specific app ID. For example:

"topic": "DEMO-OMNICHANNEL-APP-DEV"

Partitions

Kafka topics are divided into partitions.

Topics and partitions are created by Nuance. To get the list of partitions for a topic, use the Get Partitions endpoint.

This endpoint returns the topic name and partition numbers that correspond to the client credentials used when creating the access token. For example:

{
  "topic": "DEMO-OMNICHANNEL-APP-DEV",
  "partitions": [
    0
  ]
}
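A response of this shape can be unpacked with a few lines of code. The sketch below works on the response body only; how the request is sent (URL, headers, token handling) is not shown, and `parse_partitions` is a hypothetical helper name.

```python
import json


def parse_partitions(body: str) -> tuple[str, list[int]]:
    """Extract the topic name and partition numbers from the JSON body."""
    data = json.loads(body)
    return data["topic"], [int(p) for p in data["partitions"]]


# Sample body copied from the example response above.
sample = '{"topic": "DEMO-OMNICHANNEL-APP-DEV", "partitions": [0]}'
topic, partitions = parse_partitions(sample)
print(topic, partitions)
```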

Consumers

Consumers pull the messages from topics. You create your own consumers using the Create Consumer endpoint.

In Mix, consumer names follow this nomenclature:

consumer-UUIDv4

Where UUIDv4 is a Version 4 UUID. For example:

consumer-9872ec64-50d9-4dcd-9f4f-e8b5099a7f4f
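If you store or log consumer names, a quick format check can catch copy-and-paste errors. This is a sketch only: it assumes the UUID is written in lowercase hexadecimal, as in the example above, and `is_consumer_name` is a hypothetical helper.

```python
import re

# "consumer-" followed by a version 4 UUID: the third group starts with 4
# and the fourth group starts with 8, 9, a, or b.
CONSUMER_NAME = re.compile(
    r"^consumer-[0-9a-f]{8}-[0-9a-f]{4}-4[0-9a-f]{3}-[89ab][0-9a-f]{3}-[0-9a-f]{12}$"
)


def is_consumer_name(name: str) -> bool:
    """Return True if name matches the consumer-UUIDv4 nomenclature."""
    return CONSUMER_NAME.match(name) is not None


print(is_consumer_name("consumer-9872ec64-50d9-4dcd-9f4f-e8b5099a7f4f"))
```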

Consumer groups

A consumer group is a set of consumers that cooperate to consume data from topics. Partitions are assigned to a consumer group. Each consumer in a consumer group receives messages from a different subset of the assigned partitions.
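To picture how the partitions assigned to a group are split among its consumers, consider the following toy model. It uses a simple round-robin split, which is an assumption made for illustration; the strategy that Kafka actually applies may differ. The `distribute` function and the consumer labels are hypothetical.

```python
def distribute(partitions: list[int], consumers: list[str]) -> dict[str, list[int]]:
    """Split partitions among consumers so that no partition is shared."""
    if not consumers:
        raise ValueError("a consumer group needs at least one consumer")
    assignment = {consumer: [] for consumer in consumers}
    for index, partition in enumerate(sorted(partitions)):
        owner = consumers[index % len(consumers)]
        assignment[owner].append(partition)
    return assignment


# Three partitions shared by a group of two consumers.
print(distribute([0, 1, 2], ["consumer-a", "consumer-b"]))
```

In this model each partition has exactly one owner within the group, which matches the statement that each consumer receives messages from a different subset of partitions.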

You create your own consumer groups using the Create Consumer endpoint.

In Mix, consumer group names follow this nomenclature:

appID-appID/topic-clientName-clientName-two-digit number

Where:

- appID/topic: Name of the topic; in Mix, this is the app ID name.

- clientName: Name of one of the clients defined for the app ID; this information is available from the Mix dashboard Manage tab. Nuance recommends that you create one client per organization that needs to access event logs for an app ID. See Best practices.

- two-digit number: A two-digit number, starting at 00. See note below.

For example:

appID-DEMO-OMNICHANNEL-APP-DEV-clientName-default-00
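The nomenclature can be expressed as a small formatting helper. This sketch only builds the name string; `consumer_group_name` is a hypothetical function, and it does not check how many groups your account is allowed to create.

```python
def consumer_group_name(app_id: str, client_name: str, number: int) -> str:
    """Build a name of the form appID-<app ID>-clientName-<client>-<NN>."""
    if not 0 <= number <= 99:
        raise ValueError("number must fit in two digits (00 to 99)")
    return f"appID-{app_id}-clientName-{client_name}-{number:02d}"


print(consumer_group_name("DEMO-OMNICHANNEL-APP-DEV", "default", 0))
# prints appID-DEMO-OMNICHANNEL-APP-DEV-clientName-default-00
```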

Note:

By default, you can create five consumer groups per appID/clientName pair (00, 01, 02, 03, 04). If you need more, contact your Nuance representative.

Consuming messages

Consumers pull the messages from topics. A consumer must be assigned to one or more partitions to pull messages. There are two ways to assign partitions:

- By manually assigning the consumer to a specific partition using the Assign Partitions endpoint

- By subscribing to a topic using the Subscribe endpoint; in this case, consumers are assigned dynamically to the partitions of a topic

Note:

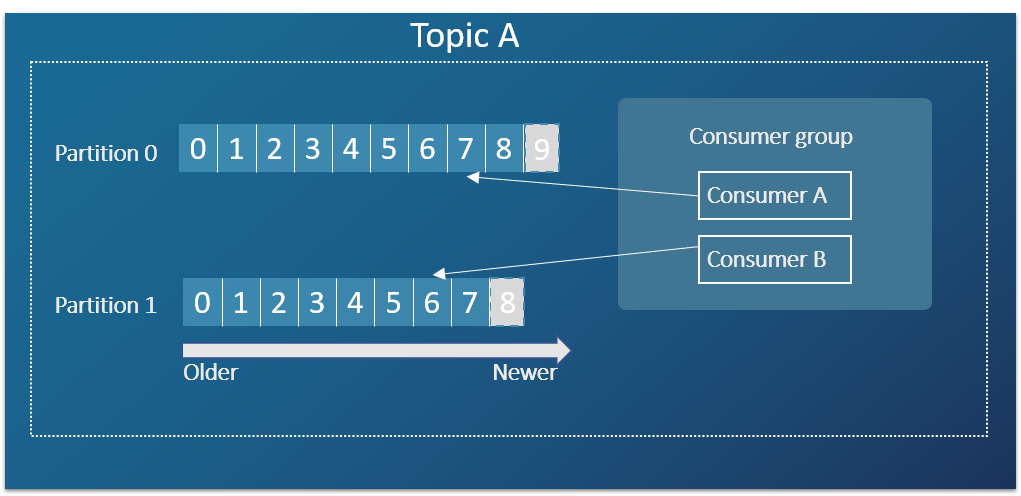

Use only one of the above methods.The following diagram summarizes some of the concepts covered so far. Topic A includes two partitions, and each partition stores messages as they are generated. Consumers are part of consumer groups and read the messages.

To determine which messages to pull, Kafka uses offsets.

Using offsets

Offsets specify a position in a partition and determine where a consumer should start pulling messages.

Each consumer tracks its position (that is, offset) in a partition. By default, when a consumer is created, it is set to start reading the newest message in a partition. For example, in the diagram above, a new consumer assigned to partition 0 will start reading the next message coming at offset 9.

Kafka uses the following terms when talking about offsets:

- The earliest offset, also called beginning offset, points to the first available offset in a partition.

- The latest offset, also called end offset, points to the last available offset in a partition.

- The current offset points to the last message that Kafka has sent to a consumer. The current offset ensures that the same records are not sent again to the same consumer.

- The committed offset points to the last record that a consumer has successfully processed. This can be set manually with the Commit Offsets endpoint or automatically by setting the auto.commit.enable parameter to true when creating consumers.

Offsets are attached to a consumer group, so consumers should use the same consumer group to maintain the offsets.
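The four offset terms can be illustrated with a toy in-memory model. This is a sketch that follows the definitions as worded in this section and does not call Kafka or the Nuance endpoints; the `Partition` and `GroupOffsets` classes are hypothetical.

```python
class Partition:
    """Toy partition: the list index of a message is its offset."""

    def __init__(self, messages):
        self.messages = list(messages)

    def earliest(self):
        return 0  # nothing expires in this toy model

    def latest(self):
        return len(self.messages) - 1


class GroupOffsets:
    """Offsets tracked for one consumer group on one partition."""

    def __init__(self, partition):
        self.partition = partition
        # Default behavior: start with the next message to arrive.
        self.next_to_read = len(partition.messages)
        self.current = None    # last offset sent to the consumer
        self.committed = None  # last offset marked as processed

    def poll(self):
        """Return the messages not yet sent and advance the current offset."""
        batch = self.partition.messages[self.next_to_read:]
        if batch:
            self.current = len(self.partition.messages) - 1
            self.next_to_read = self.current + 1
        return batch

    def commit(self):
        """Mark everything sent so far as processed."""
        self.committed = self.current


partition = Partition([f"event-{i}" for i in range(9)])  # offsets 0 to 8
group = GroupOffsets(partition)
print(group.poll())                  # nothing new yet
partition.messages.append("event-9")
print(group.poll())                  # the message at offset 9
group.commit()
print(partition.earliest(), partition.latest(), group.current, group.committed)
```

Because the offsets live in `GroupOffsets` rather than in an individual consumer, any consumer that joins the same group would resume from the same position.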
