Client app development

The gRPC protocol lets you create a client application for recognizing speech. This topic describes how to implement the basic functionality of Nuance Recognizer in the context of a Python application.

Note:

For the complete application, see the sample Python application.

Sequence flow

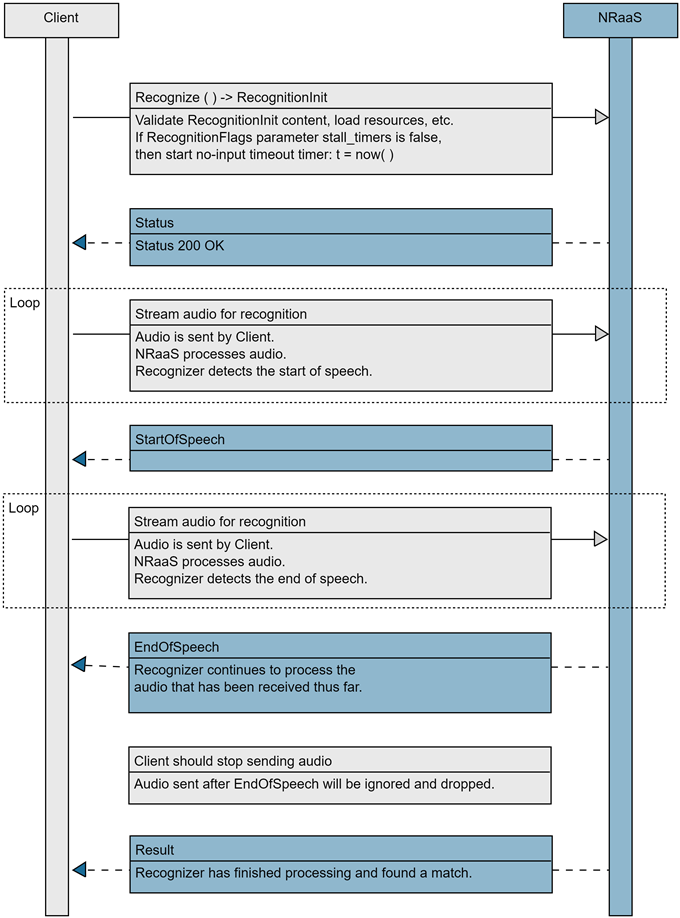

The essential tasks are illustrated in the following high-level sequence flow of a client application at runtime:

Authorize

Nuance Mix uses the OAuth 2.0 protocol for authorization. Your client application must provide an access token to access the runtime service. The token expires after a short period of time, so it must be regenerated frequently.
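Because the token expires, a long-running client may want to cache it and request a new one only when needed. The following is a minimal sketch of that idea, not part of the sample application: the `TokenCache` class, the `fetch` callable, and the 60-second safety margin are all assumptions made for illustration, and the actual token lifetime depends on the authorization server.

```python
import time


class TokenCache:
    """Caches an access token and refreshes it shortly before it expires."""

    def __init__(self, fetch, margin_s=60, clock=time.monotonic):
        # fetch is a hypothetical callable returning (token, lifetime_in_seconds).
        self._fetch = fetch
        self._margin_s = margin_s
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        # Fetch a new token only if none is cached or the cached one is about to expire.
        if self._token is None or self._clock() >= self._expires_at - self._margin_s:
            self._token, lifetime_s = self._fetch()
            self._expires_at = self._clock() + lifetime_s
        return self._token
```

The clock is injectable so that the refresh logic can be exercised without waiting for a real token to expire.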

Your client application uses the client ID and secret from the Mix dashboard (see Prerequisites from Mix) to generate an access token from the Nuance authorization server.

The client ID starts with appID: followed by a unique identifier. If you are using the curl command, replace the colon with %3A so the value can be parsed correctly:

appID:NMDPTRIAL_your_name_company_com_2020...

-->

appID%3ANMDPTRIAL_your_name_company_com_2020...
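If the client ID is handled in Python rather than on the curl command line, the same substitution can be done with the standard library. This is a small sketch; the `encode_client_id` helper and the sample ID are hypothetical and only show the transformation.

```python
from urllib.parse import quote


def encode_client_id(client_id):
    """Percent-encodes a client ID so that it contains no literal colons."""
    # safe="" leaves no reserved character unescaped, so ":" becomes "%3A".
    return quote(client_id, safe="")


# Hypothetical client ID, used only to show the transformation.
print(encode_client_id("appID:EXAMPLE_ID:geo:us:clientName:default"))
# appID%3AEXAMPLE_ID%3Ageo%3Aus%3AclientName%3Adefault
```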

The token may be generated in several ways, either as part of the client application or separately, for example in a script file.

In this example, a Linux shell script or Windows batch file generates a token, stores it in an environment variable, and passes it to the client. The script replaces the colons in the client ID with %3A so curl can parse the value correctly.

Note:

The nr.api.nuance.com:443 URI calls NRaaS for the United States region and North America language group. When calling NRaaS, use the URI that matches your region and language group.

#!/bin/bash

CLIENT_ID=<Mix client ID, starting with appID:>
SECRET=<Mix client secret>

#Change colons (:) to %3A in client ID
CLIENT_ID=${CLIENT_ID//:/%3A}

MY_TOKEN="`curl -s -u "$CLIENT_ID:$SECRET" \
"https://auth.crt.nuance.com/oauth2/token" \
-d "grant_type=client_credentials" -d "scope=asr nlu tts dlg nr" \
| python -c 'import sys, json; print(json.load(sys.stdin)["access_token"])'`"

python3 my-python-client.py nr.api.nuance.com:443 $MY_TOKEN $1

@echo off
setlocal enabledelayedexpansion

set CLIENT_ID=<Mix client ID, starting with appID:>
set SECRET=<Mix client secret>

rem Change colons (:) to %3A in client ID
set CLIENT_ID=!CLIENT_ID::=%%3A!

set command=curl -s ^
-u %CLIENT_ID%:%SECRET% ^
-d "grant_type=client_credentials" -d "scope=asr nlu tts dlg nr" ^
https://auth.crt.nuance.com/oauth2/token

for /f "delims={}" %%a in ('%command%') do (
  for /f "tokens=1 delims=:, " %%b in ("%%a") do set key=%%b
  for /f "tokens=2 delims=:, " %%b in ("%%a") do set value=%%b
  goto done:
)

:done

rem Remove quotes
set MY_TOKEN=!value:"=!

python my-python-client.py nr.api.nuance.com:443 %MY_TOKEN% %1

The client uses the token to create a secure connection to the NRaaS service:

# Set arguments

hostaddr = sys.argv[1]

access_token = sys.argv[2]

audio_file = sys.argv[3]

# Create channel and stub

call_credentials = grpc.access_token_call_credentials(access_token)

ssl_credentials = grpc.ssl_channel_credentials()

channel_credentials = grpc.composite_channel_credentials(ssl_credentials, call_credentials)

#!/bin/bash

# Remember to change the colon (:) in your CLIENT_ID to code %3A
CLIENT_ID="appID%3ANMDPTRIAL_your_name_company_com_20201102T144327123022%3Ageo%3Aus%3AclientName%3Adefault"
SECRET="9L4l...8oda"

export MY_TOKEN="`curl -s -u "$CLIENT_ID:$SECRET" \
"https://auth.crt.nuance.com/oauth2/token" \
-d "grant_type=client_credentials" -d "scope=nr" \
| python -c 'import sys, json; print(json.load(sys.stdin)["access_token"])'`"

./my-python-client.py nr.api.nuance.com:443 $MY_TOKEN $1
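As noted earlier, the token can also be generated as part of the client itself. The sketch below shows one way this might look using only the Python standard library. It mirrors the curl command in the scripts (same token URL, HTTP Basic authentication with the percent-encoded client ID, and the client_credentials grant), but the function names `build_token_request` and `fetch_token` are hypothetical and the code is not taken from the sample application.

```python
import base64
import json
import urllib.parse
import urllib.request

TOKEN_URL = "https://auth.crt.nuance.com/oauth2/token"  # same URL as in the scripts


def build_token_request(client_id, secret, scope="nr"):
    """Builds a POST request equivalent to the curl command in the scripts."""
    # Percent-encode the client ID so its colons do not clash with the
    # "id:secret" separator used by HTTP Basic authentication.
    encoded_id = urllib.parse.quote(client_id, safe="")
    basic = base64.b64encode(f"{encoded_id}:{secret}".encode("utf-8")).decode("ascii")
    body = urllib.parse.urlencode(
        {"grant_type": "client_credentials", "scope": scope}
    ).encode("ascii")
    return urllib.request.Request(
        TOKEN_URL,
        data=body,
        headers={"Authorization": "Basic " + basic},
        method="POST",
    )


def fetch_token(client_id, secret, scope="nr"):
    """Sends the request and returns the access_token field of the JSON reply."""
    with urllib.request.urlopen(build_token_request(client_id, secret, scope)) as reply:
        return json.load(reply)["access_token"]
```

Keeping request construction separate from the network call makes it possible to inspect the request without contacting the authorization server.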

Import functions

The application imports all functions from the NRC client stubs that you generated from the proto files in gRPC setup.

Do not edit these stub files.

Import functions from stubs:

from nrc_pb2 import *

from nrc_pb2_grpc import *

Set recognition parameters

The application sets a RecognitionInit message containing RecognitionParameters, or parameters that define the type of recognition you want. Consult your generated stubs for the precise parameter names. Some parameters are:

- Audio format (mandatory): The codec of the audio: mu-law, A-law, or PCM.

- Result format: The format of the recognition result sent back to the client: Natural Language Semantics Markup Language (NLSML) or Extensible Multimodal Annotation Language (EMMA). This example leaves the result format unset, so the result is returned in the default NLSML format.

- No-input timeout: How much silence triggers a no-input timeout status response from the recognizer. This example tells the recognizer to return a no-input status response if it receives more than two seconds of silence before any speech has been detected.

For information on all the recognition parameters, see NRaaS gRPC API RecognitionParameters.

A RecognitionInit message must also include at least one resource, such as a built-in grammar, a URL grammar, or an inline grammar. This example performs recognition against the recognizer's built-in digits grammar, which recognizes digits (zero, one, two, three, up to nine).

Create RecognitionInit message and set recognition parameters:

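def recognition_init():
    # builtin_digits_grammar is not shown in this excerpt; it is assumed to be
    # defined elsewhere in the application
    init = RecognitionInit(
        parameters = RecognitionParameters(
            audio_format = AudioFormat(ulaw = ULaw()),
            no_input_timeout_ms = 2000
        ),
        resources = builtin_digits_grammar
    )
    # Return the message so the caller can send it in the first RecognitionRequest
    return init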

Call client stub

The app must specify the location of the Nuance Mix instance, the access token, and the source of the audio. See Authorize.

Using this information, the app calls a client stub function or class. In some languages, this stub is defined in the generated client files: in Python it is named NRCStub, in Go it is NRCClient, and in Java it is NRCStub.

Define and call client stub:

srvaddr = sys.argv[1]
access_token = sys.argv[2]
audio_file = sys.argv[3]

. . .

call_credentials = grpc.access_token_call_credentials(access_token)
ssl_credentials = grpc.ssl_channel_credentials()
channel_credentials = grpc.composite_channel_credentials(ssl_credentials, call_credentials)

with grpc.secure_channel(srvaddr, credentials=channel_credentials) as channel:
    nrc = NRCStub(channel)
    response_iterator = nrc.Recognize(client_stream(audio_file))
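The example reads its three inputs directly from sys.argv. As an optional refinement, the arguments could be checked before the channel is created. The sketch below is an assumption about how that might be done with argparse; the `parse_args` helper and its host:port check are illustrative and not part of the sample application.

```python
import argparse


def parse_args(argv):
    """Parses the three positional arguments used by the client."""
    parser = argparse.ArgumentParser(description="Hypothetical parser for the sample client")
    parser.add_argument("srvaddr", help="host:port of the service, e.g. nr.api.nuance.com:443")
    parser.add_argument("access_token", help="OAuth 2.0 access token")
    parser.add_argument("audio_file", help="path of the audio file to recognize")
    args = parser.parse_args(argv)

    # Reject addresses that are not of the form host:port before opening a channel.
    host, sep, port = args.srvaddr.rpartition(":")
    if not sep or not host or not port.isdigit():
        parser.error("srvaddr must look like host:port")
    return args
```

Calling `parse_args(sys.argv[1:])` would then provide `args.srvaddr`, `args.access_token`, and `args.audio_file` in place of the three sys.argv lookups.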

Request recognition

After setting recognition parameters, the app sends the RecognitionRequest stream, including recognition parameters and the audio to process, to the channel and stub.

In this Python example, a two-part yield structure first sends the recognition parameters, then sends the audio for recognition in chunks of 160 bytes (20 ms of audio per chunk for 8 kHz mu-law). The mu-law audio is read from the specified audio file.

yield RecognitionRequest(recognition_init = init)

. . .

yield RecognitionRequest(audio = bytes(data))

Normally, your app will send streaming audio to Nuance Mix for processing but, for simplicity, this application simulates streaming audio by breaking up an audio file into chunks and feeding it to the recognizer.

Request recognition and simulate audio stream:

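def client_stream(filename):
    try:
        # Start the recognition
        init = recognition_init()
        yield RecognitionRequest(recognition_init = init)

        # Read the audio file and send it in 160-byte packets
        packet = 0
        with open(filename, "rb") as f:
            while True:
                data = f.read(160)
                if not data:
                    break
                else:
                    packet += 1
                    print("audio packet " + repr(packet) + " length " + repr(len(data)))
                    yield RecognitionRequest(audio = bytes(data))
    except Exception as e:
        # Handler assumed for illustration: report the error and re-raise it
        print("client_stream exception: " + repr(e))
        raise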
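The 160-byte chunk size follows from the audio format: 8 kHz mu-law uses one byte per sample, so 20 ms of audio is 8000 × 1 × 0.020 = 160 bytes. The sketch below works through that arithmetic and shows the chunking loop in isolation, without the gRPC messages. The helper names and the optional pacing delay are assumptions added for illustration; the example above sends the chunks without pacing.

```python
import io
import time


def chunk_size_bytes(sample_rate_hz, bytes_per_sample, chunk_ms):
    """Returns the number of bytes that hold chunk_ms milliseconds of audio."""
    return sample_rate_hz * bytes_per_sample * chunk_ms // 1000


def read_chunks(stream, size, pace_s=0.0):
    """Yields successive chunks of at most `size` bytes until the stream is exhausted."""
    while True:
        data = stream.read(size)
        if not data:
            return
        yield data
        if pace_s:
            # Optional delay to approximate a real-time audio source.
            time.sleep(pace_s)


size = chunk_size_bytes(8000, 1, 20)  # 8 kHz mu-law, 20 ms per chunk
chunks = list(read_chunks(io.BytesIO(bytes(400)), size))
print(size, [len(c) for c in chunks])  # 160 [160, 160, 80]
```

As in the packet log of the example, the last chunk is shorter whenever the audio length is not a multiple of the chunk size.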

Process results

Finally, the app returns the results received from the NRC engine. This app simply prints the Result message containing the recognized formatted text on screen. The formatted text is in NLSML format by default (unless the EMMA format was specified in the RecognitionInit message).

audio packet 1 length 160

audio packet 2 length 160

...

audio packet 9 length 160

audio packet 10 length 160

nrc.Recognize() reponse --> status {

code: 200

message: "OK"

}

audio packet 11 length 160

audio packet 12 length 160

...

audio packet 20 length 160

audio packet 21 length 160

nrc.Recognize() reponse --> start_of_speech {

}

audio packet 22 length 160

audio packet 23 length 160

...

audio packet 141 length 160

audio packet 142 length 14

nrc.Recognize() reponse --> end_of_speech {

first_audio_to_end_of_speech_ms: 2821

}

nrc.Recognize() reponse --> result {

formatted_text: "<result><interpretation conf=\"0.91\"><text mode=\"voice\">zero one two three four</text><instance grammar=\"builtin:grammar/digits\"><SWI_meaning>01234</SWI_meaning><MEANING conf=\"0.91\">01234</MEANING><SWI_literal>zero one two three four</SWI_literal><SWI_grammarName>builtin:grammar/digits</SWI_grammarName></instance></interpretation><interpretation conf=\"0.49\"><text mode=\"voice\">zero oh one two three four</text><instance grammar=\"builtin:grammar/digits\"><SWI_meaning>001234</SWI_meaning><MEANING conf=\"0.49\">001234</MEANING><SWI_literal>zero oh one two three four</SWI_literal><SWI_grammarName>builtin:grammar/digits</SWI_grammarName></instance></interpretation></result>"

status: "SUCCESS"

}

This example shows the results from the audio file, 01234.ulaw, an 8 kHz μ-law audio file speaking the digits from 0 to 4. The audio file says:

zero one two three four
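The packet log can be cross-checked against the audio format. The log shows 141 full packets of 160 bytes followed by one packet of 14 bytes; at 8 kHz with one byte per sample, that amounts to about 2.8 seconds of audio, which appears consistent with the first_audio_to_end_of_speech_ms value of 2821 reported in the end_of_speech response. The sketch below performs that calculation; the `audio_duration_ms` helper is hypothetical.

```python
def audio_duration_ms(total_bytes, sample_rate_hz=8000, bytes_per_sample=1):
    """Returns the duration in milliseconds of raw audio of the given size."""
    return total_bytes * 1000 / (sample_rate_hz * bytes_per_sample)


# Packet counts taken from the log above: 141 packets of 160 bytes, then 14 bytes.
total_bytes = 141 * 160 + 14
print(total_bytes, audio_duration_ms(total_bytes))  # 22574 2821.75
```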

The formatted_text entry of the Result response consists of an NLSML-formatted string showing what was recognized from the audio. In this case, the NLSML result contains two possible interpretations of the recognized speech; the first has the highest confidence, 0.91.

Here is that formatted_text NLSML result (reformatted for readability):

<result>
  <interpretation conf="0.91">
    <text mode="voice">zero one two three four</text>
    <instance grammar="builtin:grammar/digits">
      <SWI_meaning>01234</SWI_meaning>
      <MEANING conf="0.91">01234</MEANING>
      <SWI_literal>zero one two three four</SWI_literal>
      <SWI_grammarName>builtin:grammar/digits</SWI_grammarName>
    </instance>
  </interpretation>
  <interpretation conf="0.49">
    <text mode="voice">zero oh one two three four</text>
    <instance grammar="builtin:grammar/digits">
      <SWI_meaning>001234</SWI_meaning>
      <MEANING conf="0.49">001234</MEANING>
      <SWI_literal>zero oh one two three four</SWI_literal>
      <SWI_grammarName>builtin:grammar/digits</SWI_grammarName>
    </instance>
  </interpretation>
</result>
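A client that needs the recognized digits rather than the raw string could parse the NLSML with a standard XML parser. The following is a minimal sketch using xml.etree.ElementTree on an abridged copy of the result above. The `interpretations` helper is hypothetical, and the element names are assumed to match this particular result; other grammars may produce different elements.

```python
import xml.etree.ElementTree as ET

# Abridged copy of the NLSML result shown above.
NLSML = """<result>
  <interpretation conf="0.91">
    <text mode="voice">zero one two three four</text>
    <instance grammar="builtin:grammar/digits">
      <SWI_meaning>01234</SWI_meaning>
    </instance>
  </interpretation>
  <interpretation conf="0.49">
    <text mode="voice">zero oh one two three four</text>
    <instance grammar="builtin:grammar/digits">
      <SWI_meaning>001234</SWI_meaning>
    </instance>
  </interpretation>
</result>"""


def interpretations(nlsml):
    """Returns the interpretations in an NLSML string, highest confidence first."""
    root = ET.fromstring(nlsml)
    found = []
    for node in root.findall("interpretation"):
        found.append({
            "conf": float(node.get("conf")),
            "text": node.findtext("text"),
            "meaning": node.findtext("instance/SWI_meaning"),
        })
    return sorted(found, key=lambda item: item["conf"], reverse=True)


best = interpretations(NLSML)[0]
print(best["meaning"], best["conf"])  # 01234 0.91
```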

Receive and print all response messages from the recognizer:

response_iterator = nrc.Recognize(client_stream(audio_file))

for response in response_iterator:
    print("nrc.Recognize() reponse --> " + repr(response))
